Arnon Shimoni

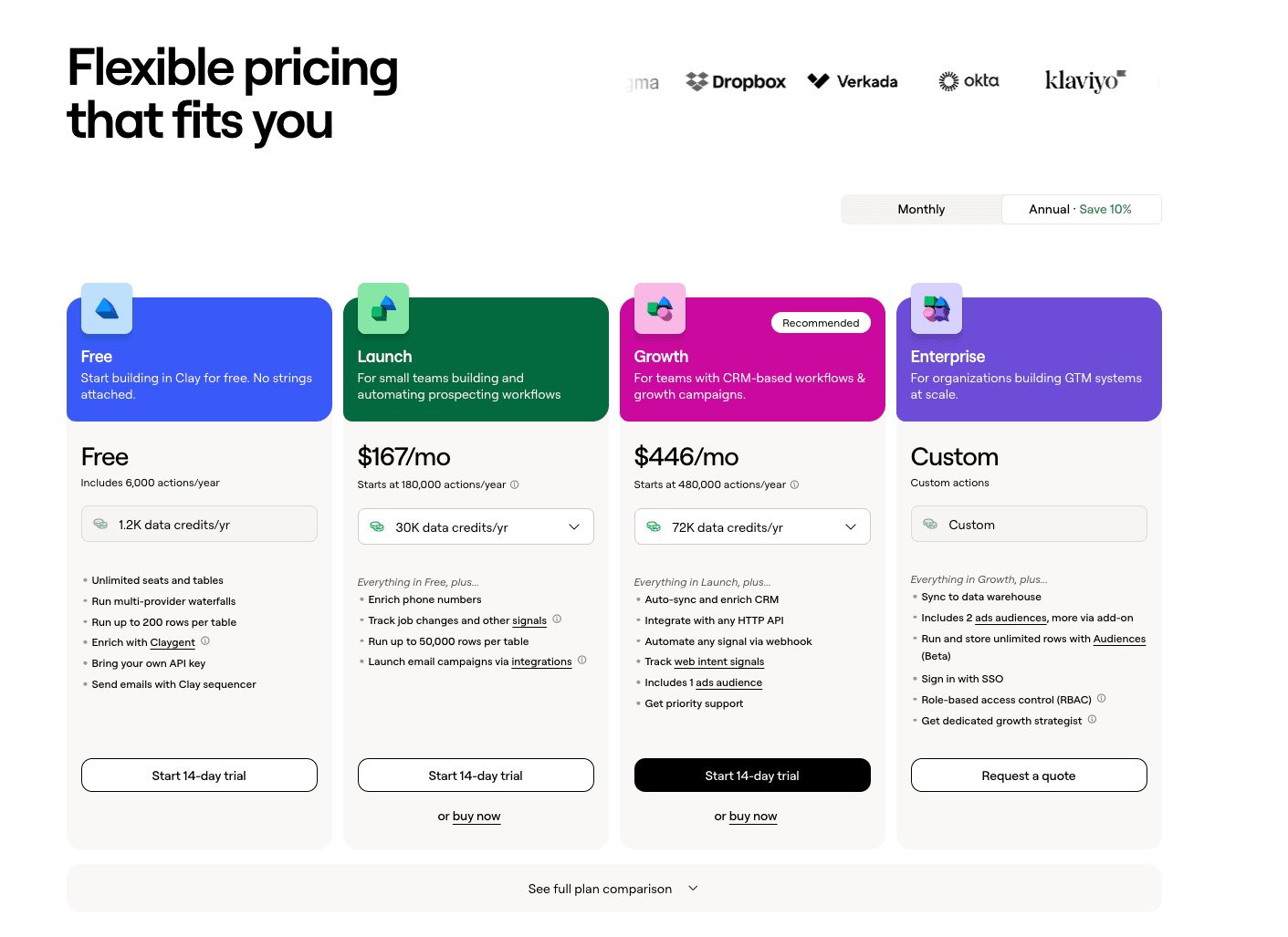

Clay announced a pricing overhaul this month. They split pricing into two axes: data credits for cost, actions for platform value. AI model costs pass through at 0% markup and data credit prices dropped 50–90%.

At face value it's a competitive move with cheaper credits and simpler plans, but underneath it's much more than a discount. Clay decided their margin lives in the platform instead of the tokens, so they zeroed out AI inference margin entirely to make that point.

More companies are making the same bet, and the billing infrastructure to support it is different from what most teams have today.

What platform + tokens actually means in 2026

The model has two components doing different jobs.

The platform fee covers access, features, and workflows: the value the product creates. This is where margin lives. Clay calls it "actions", Snowflake calls it compute credits, and Vercel charges for bandwidth and builds. The platform is the differentiated part, and that's exactly the thing that's hard to replicate.

The token layer passes through AI inference costs at minimal or zero markup. PostHog charges 20% and states it plainly. Clay charges 0%. The reasoning is that AI inference is a commodity. Competing on token price only goes one direction, which is down. Competing on platform value doesn't.
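To make the pass-through concrete, here is a minimal sketch of how a token line item could be priced. The function name and the $10.00 provider cost are assumptions for illustration. Only the 0% and 20% markups come from the examples above.

```python
from decimal import Decimal


def token_charge(provider_cost: Decimal, markup_pct: Decimal) -> Decimal:
    """Amount billed for inference that cost `provider_cost` at the provider."""
    billed = provider_cost * (Decimal(1) + markup_pct / Decimal(100))
    return billed.quantize(Decimal("0.01"))


cost = Decimal("10.00")  # hypothetical provider cost for one customer
zero_markup = token_charge(cost, Decimal(0))     # Clay-style 0% markup
twenty_markup = token_charge(cost, Decimal(20))  # PostHog-style 20% markup
print(zero_markup, twenty_markup)
```

At 0% the customer pays exactly what the provider charged, so any margin has to come from the platform fee.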

Snowflake got here before AI made it necessary. They separated storage and data transfer, where costs largely pass through, from compute and cloud services, where they actually take margin. That separation gave them a consistent answer to "what are we paying for" that held even when infrastructure costs moved around.

You pay for the car lease. You pay for fuel as you drive. The car company doesn't make money on petrol.

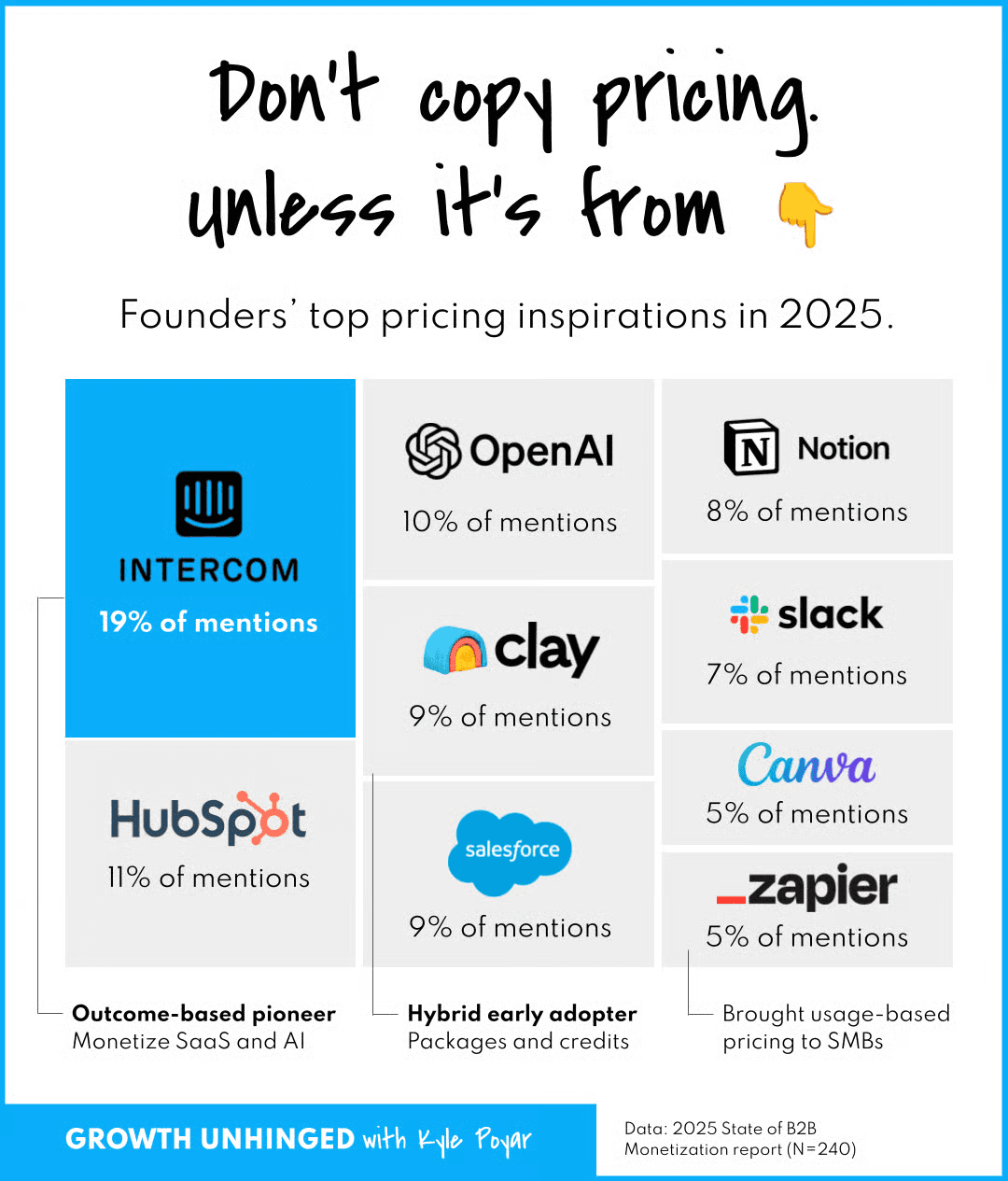

Why other companies are converging on the same model

If you read Growth Unhinged (you really should), you'll know that Figma introduced AI credits in December 2025. I'll paraphrase Kyle here: Figma didn't enforce the rules on credits for three months, then used the consumption data to calibrate before billing anyone. What they found was that a tiny subset of their users was consuming far above its allocation, and credits were the answer to that distribution.

In Figma's implementation, some credits are value-based (flat cost per prototype) and some are cost-based (variable by model selected), so users end up deciding whether Gemini 3 Pro is worth 3x the credits of a cheaper model. That's a cost/yield optimization problem Figma transferred to their customers.

Not my favourite, but whatever.

PostHog, on the other hand, solved this more cleanly with direct pass-through at 20% markup. Transparent, predictable, separated from core product value. They have ten other products to charge for, so AI is infrastructure and not the main business.

Clay's version is definitely the most aggressive we've seen with no markup on models. The bet is that GTM workflow complexity grows faster than token costs commoditize. If that's right, their margin expands with usage. If it's wrong, they've built a very good data marketplace with no margin on the expensive part.

The architecture problem

Every one of these companies ran a billing infrastructure project to make these changes possible.

Clay's split between data credits and action credits requires two separate metering systems, two wallet types, and rate cards that update independently. The 0% markup on AI models means their stack has to track actual inference costs per customer in real time, separate from platform usage. That's not a configuration change. That's a ledger architecture decision.
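As a rough sketch of what that ledger decision could look like, the snippet below keeps two wallets and a separate at-cost inference tally per customer. All class and method names are hypothetical, and this is not a description of Clay's actual system.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Wallet:
    """A prepaid balance with an append-only list of ledger entries."""
    name: str
    balance: Decimal
    entries: list = field(default_factory=list)

    def debit(self, amount: Decimal, memo: str) -> None:
        if amount > self.balance:
            raise ValueError(f"insufficient {self.name} balance")
        self.balance -= amount
        self.entries.append((memo, -amount))


@dataclass
class CustomerAccount:
    """Hypothetical account: two wallets plus an at-cost inference tally."""
    data_credits: Wallet
    actions: Wallet
    inference_cost: Decimal = Decimal("0")

    def record_enrichment(self, credits: Decimal) -> None:
        self.data_credits.debit(credits, "data enrichment")

    def record_action(self, count: Decimal) -> None:
        self.actions.debit(count, "workflow action")

    def record_inference(self, provider_cost: Decimal) -> None:
        # Tracked at cost and kept out of both wallets, so a 0% markup
        # can be billed as its own pass-through line.
        self.inference_cost += provider_cost


account = CustomerAccount(
    data_credits=Wallet("data credits", Decimal("100")),
    actions=Wallet("actions", Decimal("50")),
)
account.record_enrichment(Decimal("3"))
account.record_action(Decimal("1"))
account.record_inference(Decimal("0.0125"))
print(account.data_credits.balance, account.actions.balance, account.inference_cost)
```

The point of the separation is that each of the three numbers can be priced, topped up, and reported on its own.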

Figma's variable credit cost by model requires the billing layer to know which model ran for each action and apply a different rate. PostHog's clean 20% markup works partly because they chose simplicity as an architectural constraint: one percentage, full pass-through, no per-model complexity. That choice has infrastructure implications too.

Our experience at Solvimon shows the primary blocker to hybrid pricing adoption isn't strategy, but implementation. Bain's research corroborates it: 65% of SaaS companies adding AI adopted hybrid models, but a significant share delayed or abandoned pricing changes because their billing stack couldn't support what they wanted to run.

Clay, Figma, PostHog, and Snowflake all had the infrastructure to run these models. The announcement is what customers see, but the infrastructure project is what made it possible.

The question this raises for your pricing

If AI inference costs drop 80% over the next two years (which is the direction the market is moving), what happens to your revenue?

If your pricing is token-based with a margin baked in, your revenue compresses with the cost curve. You're selling a commodity at a margin that shrinks as the commodity gets cheaper.

If your pricing is platform-based with tokens as a pass-through, your revenue is decoupled from the cost curve. Customers benefit from cheaper inference. Your platform margin stays intact.
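Here is the arithmetic behind those two cases, as a sketch with made-up numbers: $100 of monthly inference cost per customer, a 50% token markup in the first model, and a $50 platform fee with 0% markup in the second. Only the 80% cost drop comes from the scenario above.

```python
from decimal import Decimal


def gross_margin(platform_fee: Decimal, inference_cost: Decimal,
                 markup_pct: Decimal) -> Decimal:
    """Revenue minus inference cost for one customer in one period."""
    token_revenue = inference_cost * (Decimal(1) + markup_pct / Decimal(100))
    return platform_fee + token_revenue - inference_cost


cost_today = Decimal("100")                  # hypothetical inference cost
cost_later = cost_today * Decimal("0.20")    # after an 80% drop

# Token-based pricing: no platform fee, 50% markup on tokens (hypothetical).
token_today = gross_margin(Decimal("0"), cost_today, Decimal("50"))
token_later = gross_margin(Decimal("0"), cost_later, Decimal("50"))

# Platform + tokens: $50 platform fee, tokens passed through at 0%.
platform_today = gross_margin(Decimal("50"), cost_today, Decimal("0"))
platform_later = gross_margin(Decimal("50"), cost_later, Decimal("0"))

print(token_today, token_later)        # margin falls from 50 to 10
print(platform_today, platform_later)  # margin stays at 50
```

Both models start with the same $50 of margin. After the cost drop, the token-based model keeps $10 of it and the platform model keeps all $50, while the customer's total bill falls in both.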

Clay's 0% markup on AI models is a serious statement about which side of that equation they want to be on. They're betting customers will pay for workflow complexity instead of compute. Infrastructure that can support that bet needs the following:

Two wallet types

Real-time cost tracking per customer

Rate cards that can be updated without a deploy, plus the ability to change the platform price independently of the token price.
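One way to read "updated without a deploy" is that rates are data with effective dates, not constants in code. The sketch below assumes that design. The RateCard class, its methods, and every rate and date in it are hypothetical.

```python
from bisect import bisect_right
from datetime import date
from decimal import Decimal


class RateCard:
    """Versioned rates: each published rate applies from its effective date."""

    def __init__(self) -> None:
        self._dates: list[date] = []
        self._rates: list[Decimal] = []

    def publish(self, effective: date, rate: Decimal) -> None:
        # Insert in date order so lookups can use binary search.
        i = bisect_right(self._dates, effective)
        self._dates.insert(i, effective)
        self._rates.insert(i, rate)

    def rate_on(self, day: date) -> Decimal:
        i = bisect_right(self._dates, day)
        if i == 0:
            raise LookupError(f"no rate published on or before {day}")
        return self._rates[i - 1]


# Two independent cards: one for the platform price, one for token markup.
platform_price = RateCard()
token_markup_pct = RateCard()
platform_price.publish(date(2026, 1, 1), Decimal("0.05"))
token_markup_pct.publish(date(2026, 1, 1), Decimal("20"))

# Finance lowers the platform price from March; token markup is untouched.
platform_price.publish(date(2026, 3, 1), Decimal("0.04"))

print(platform_price.rate_on(date(2026, 2, 15)))
print(platform_price.rate_on(date(2026, 3, 1)))
print(token_markup_pct.rate_on(date(2026, 3, 1)))
```

Because usage is rated against whichever version was effective on the day it happened, a price change is a new row and old invoices stay reproducible.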

We know most billing v1 stacks (things like Stripe plus custom code, or homegrown systems built before hybrid pricing was the default) can't do this without significant engineering work.

The pricing strategy is now the easy part (you can copy Clay!), but the architecture that makes it executable is where most teams are still stuck.

Solvimon was built to handle exactly this, with separate wallets for platform and token costs, real-time metering, and rate cards that finance can update without filing an engineering ticket. If you're designing a platform + tokens model and want to know what the infrastructure needs to support it, talk to us.

Ready for billing v2?

Solvimon is monetization infrastructure for companies that have outgrown billing v1. One system, entire lifecycle, built by the team that did this at Adyen.